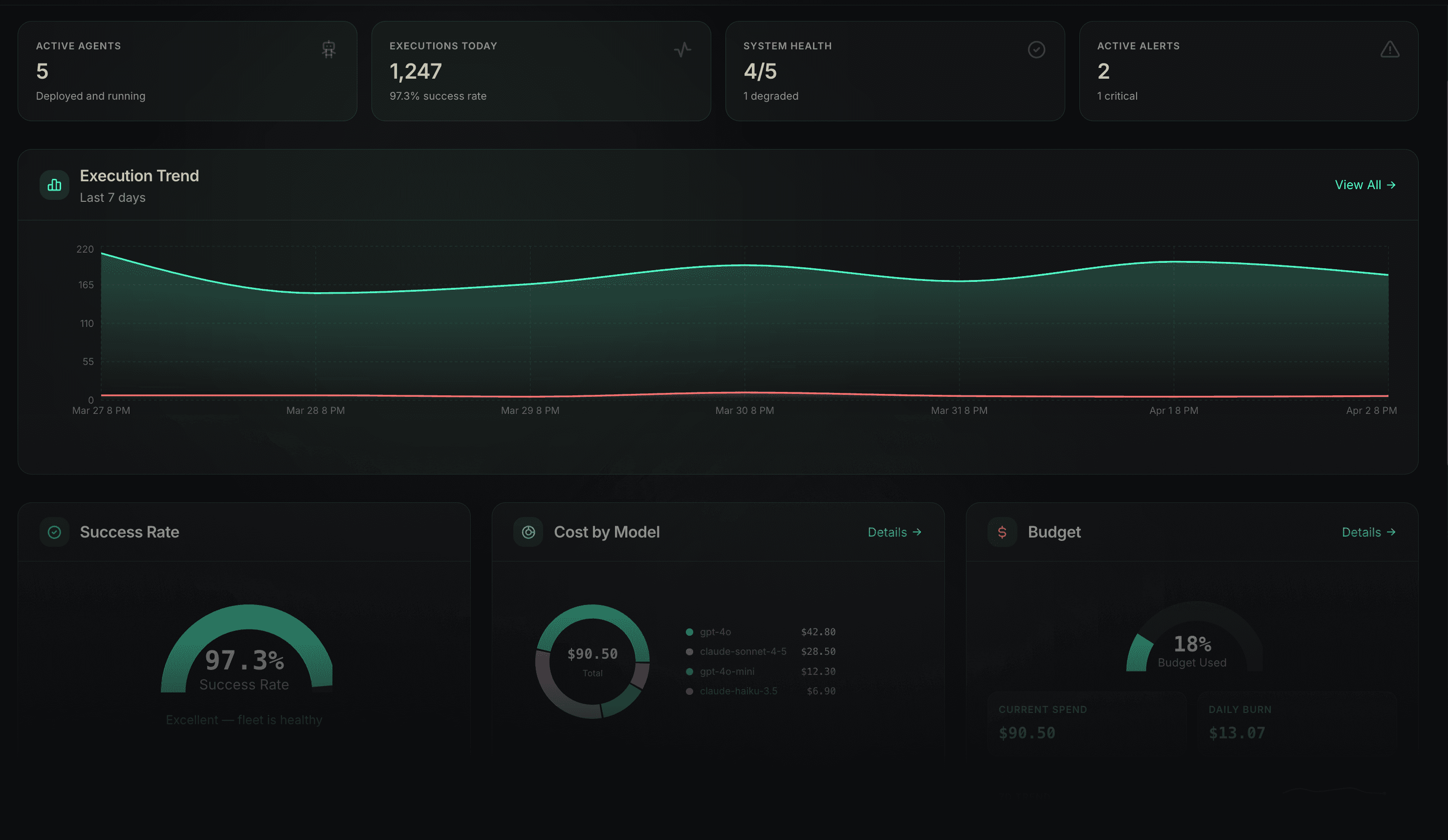

AI agents are already causing real damage.

Here's what it looks like.

Connect

AI Tool Coordination

MCP governance — policy checks, PII scanning, and audit trails on every tool call

Rug pull detection — alerts the moment a tool's capabilities change, before your agent acts on the new behavior

Human-in-the-loop inbox — escalation and delegation routing for approvals and interventions

Zero code, zero SDK — works with agents already running; no instrumentation required

Governs third-party agents via any MCP-compatible interface

Works with: Claude, GPT-4, Gemini, custom agents, and any MCP-compatible server.

Observe

Observability + Governance SDK

Captures every LLM call, tool invocation, and agent decision — full execution trace, not just logs

Enforces runtime policies before the next step executes — governance that acts, not just reports

50+ policy categories out of the box: Cost, Safety, Content, PII, Kill switches, Audit, and more

Auto-instrumentation — 2 lines of code, 200+ supported libraries

Works with any Python agent framework without changes to your agent's own code

Supports: LangChain, CrewAI, AutoGen, LlamaIndex, Semantic Kernel, and 12+ other frameworks.

Runtime

Governed Execution Layer

Policy enforcement native to every step — not layered on top after the fact

Durable execution — agents survive deploys, restarts, and workflows that run for hours or days

Spawn, suspend, resume, and replay any agent run — with optional prompt, model, or policy substitution

Full causal lineage graph that traces exactly which agent spawned which action, and why

Isolated execution, durable checkpoints, kill switches at every level

Built for: financial automation, healthcare workflows, infrastructure operations — any workflow where wrong is expensive.

Set policies once. Waxell enforces them on every agent run, before the next step executes — at sub-millisecond latency.

Waxell instruments the frameworks your agents are built on — no rip-and-replace, no vendor lock-in.

200+ libraries auto-instrumented · OpenTelemetry-native

Works alongside your existing APM · Self-hosted or cloud (US or EU)

In the 2010s, employees bypassed IT to use Dropbox, Slack, and Google Docs. Companies scrambled to govern what they couldn't see.

Today, developers are shipping AI agents without waiting for security review. Product teams are connecting third-party AI tools that operate outside any monitoring system. Entire agentic workflows are running in production with no audit trail.

The risk isn't that AI will replace your team. It's that your team is already using AI in ways you can't see, measure, or control.

Waxell is governance infrastructure for the agentic era.